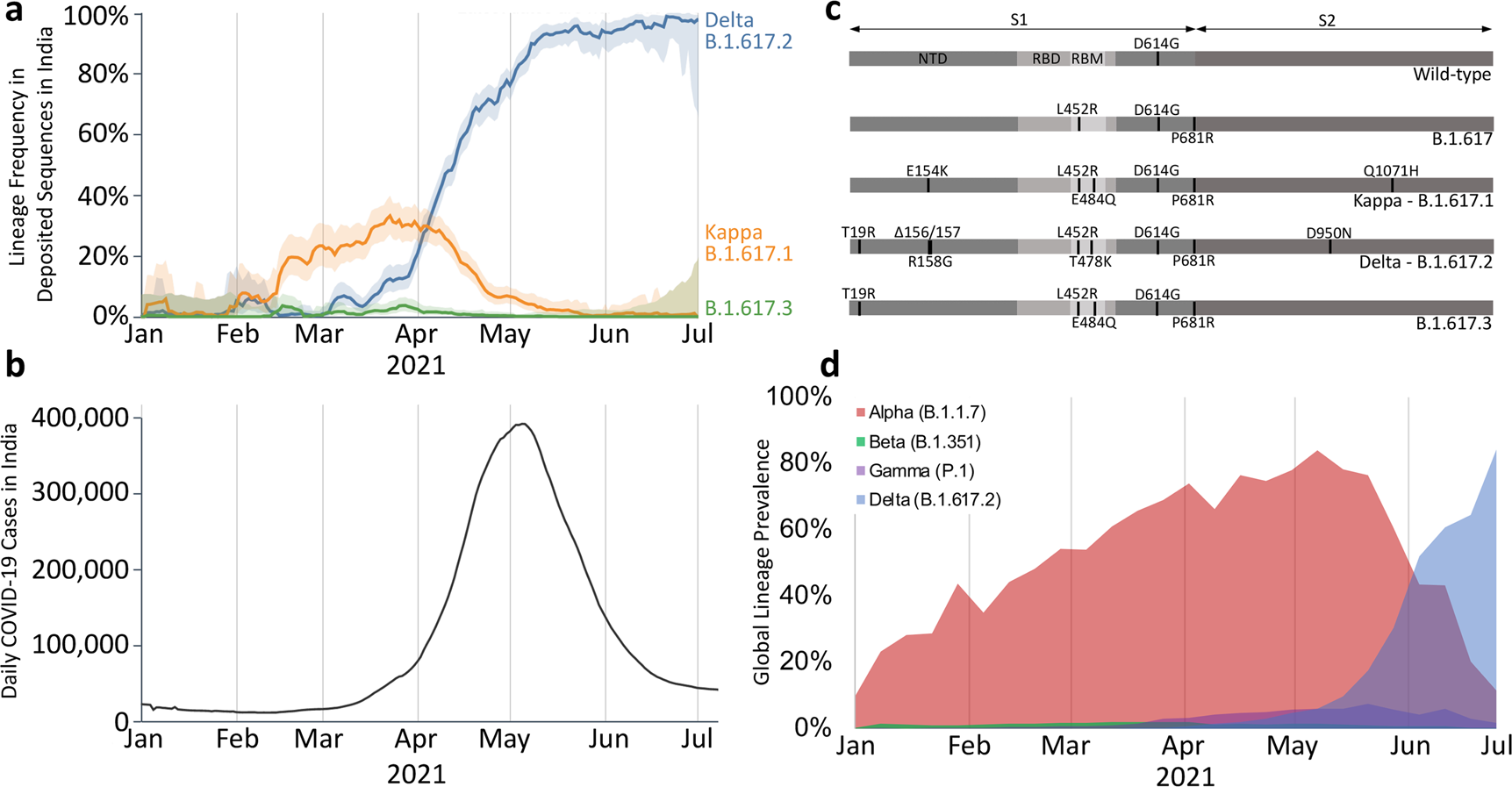

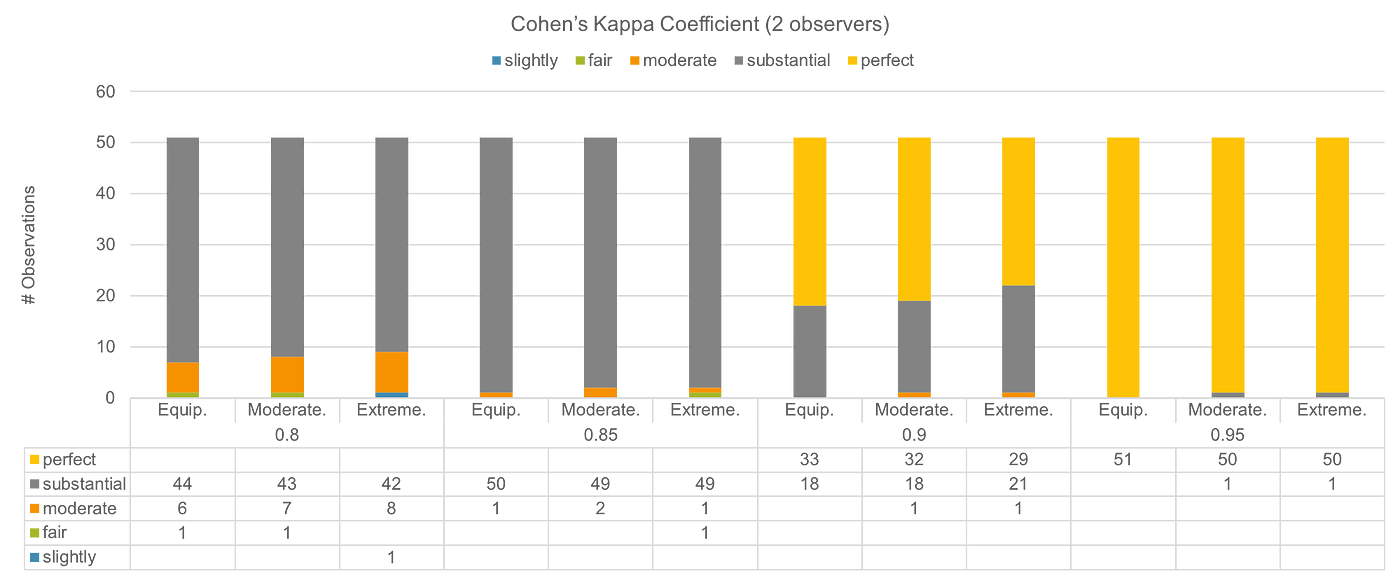

Confidence intervals for Kappa at the 95% confidence level for selected... | Download Scientific Diagram

A primer of inter‐rater reliability in clinical measurement studies: Pros and pitfalls - Alavi - Journal of Clinical Nursing - Wiley Online Library

Assessment of polytraumatized patients according to the Berlin Definition: Does the addition of physiological data really improve interobserver reliability? | PLOS ONE

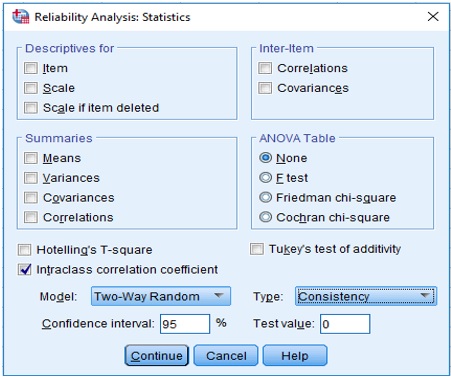

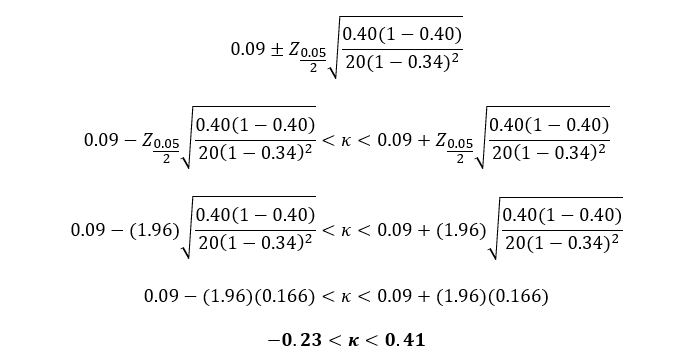

Interpretation of Kappa Values. The kappa statistic is frequently used… | by Yingting Sherry Chen | Towards Data Science

Intra-Rater and Inter-Rater Reliability of a Medical Record Abstraction Study on Transition of Care after Childhood Cancer | PLOS ONE

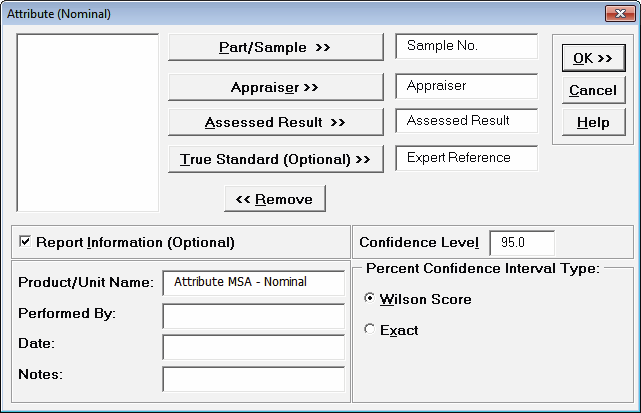

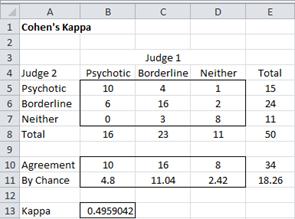

Cohen's Kappa and Fleiss' Kappa— How to Measure the Agreement Between Raters | by Audhi Aprilliant | Medium

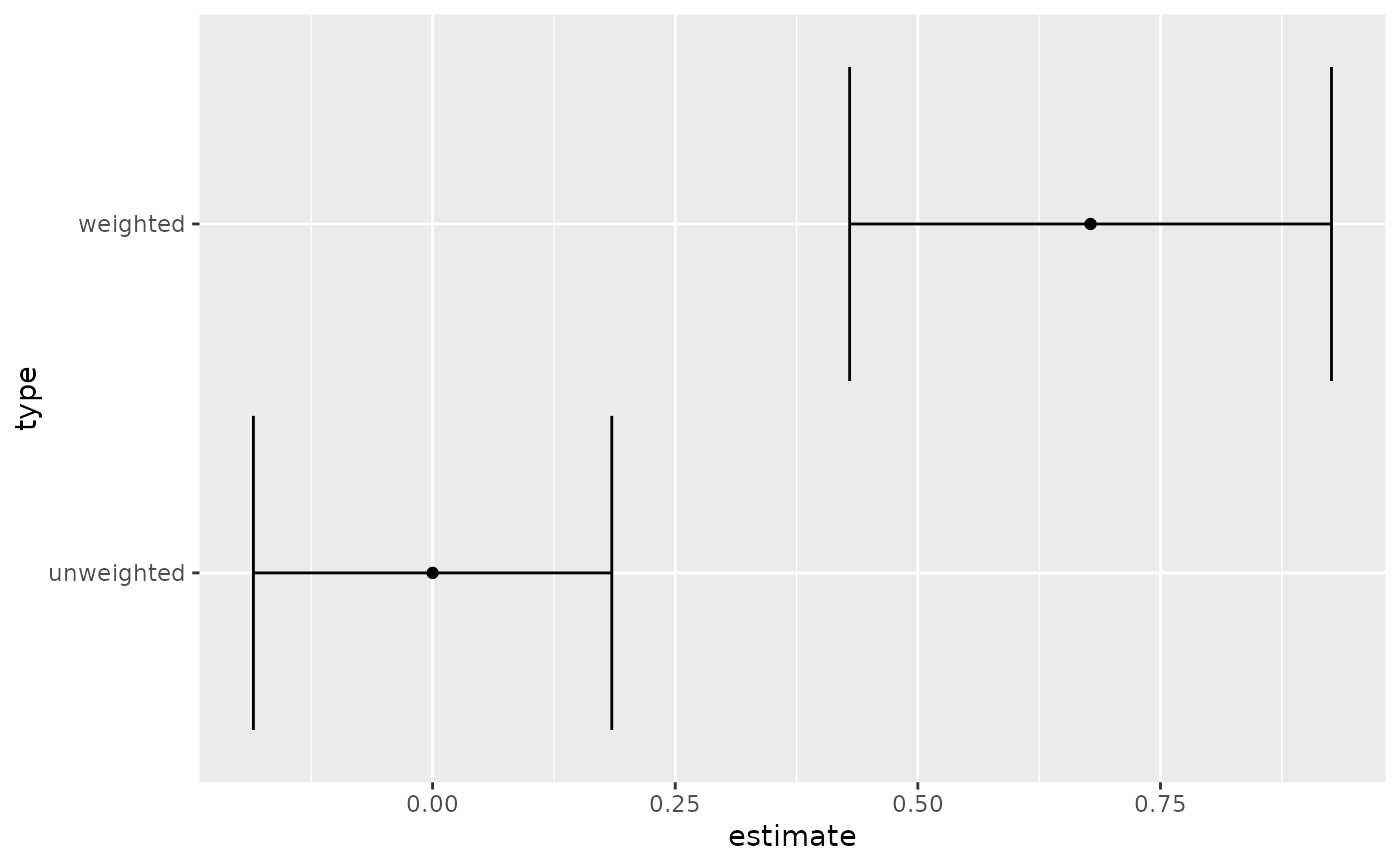

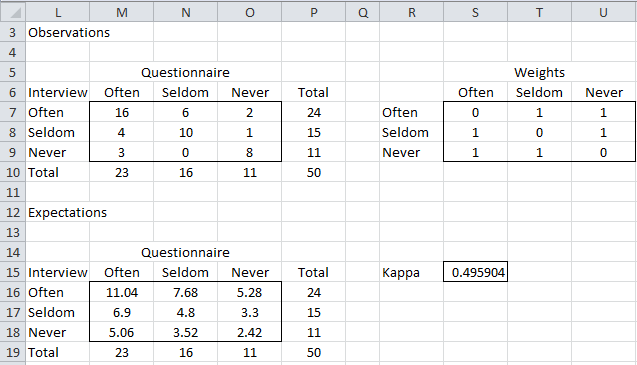

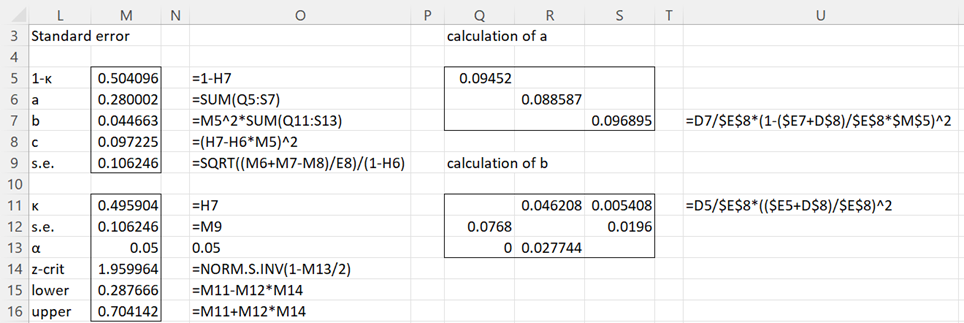

(PDF) Measuring inter-rater reliability for nominal data - Which coefficients and confidence intervals are appropriate?

Confidence intervals for Kappa at the 95% confidence level for selected... | Download Scientific Diagram